
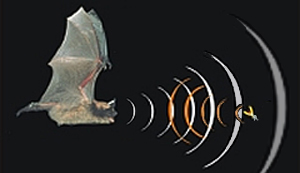

For many reasons, human consciousness is very hard to define. In particular, the kind of problem that it poses for science is very different from that of explaining physical phenomena such as falling objects, photosynthesis, or nuclear fusion. This difference has been characterized in various ways, with a number of different dichotomies. For example, some authors have stressed the private nature of consciousness, which is accessible only from the viewpoint of the conscious subject, whereas physical phenomena are accessible to any observer. Others have stressed the ineffability of consciousness: it cannot be effectively explained in terms of language, unlike physical phenomena, whose properties can be accurately expressed in terms such as mass or temperature. In a 1974 article entitled “What is it Like to Be a Bat?”, the philosopher Thomas Nagel focused on these subjective properties of conscious human experience.

Nagel’s idea was to show that because humans are incapable of echolocation, they will never be able to subjectively feel “what it is like” to orient themselves in this way. Just as we humans, with our sense of sight, perceive not electromagnetic waves in the visible-light spectrum but illuminated objects, bats may perceive their returning echoes not as sounds but directly as objects. That, however, is something we will never know. And this is exactly what is meant by the subjective side of conscious experience, as opposed to its objective side. In the bat’s case, the objective side is the acoustical physics of echolocation, which we can describe and understand; the subjective side is something we cannot. Nagel therefore concludes that science has taught us many things about how a bat’s brain functions, but not “what it is like” to be a bat. This subjective aspect of “what it is like” to be in any given conscious state is also referred to as the phenomenological aspect of consciousness. A related term, qualia (the plural of quale), more specifically designates our direct impressions of things. Qualia are the immediate experiential aspects of sensations: to offer some crude examples, the particular redness of a red apple, or the coldness of ice. Some authors even extend the concept of qualia to our most basic thoughts and drives. The problems that qualia pose for the scientific study of consciousness have led the philosopher David Chalmers to distinguish what he calls the “hard problem” of consciousness from the other, “easy” problems.


How can we explain the subjectivity of human consciousness, or, to use Thomas Nagel’s phrase, “what it is like” to be ourselves? Or, as David Chalmers would put it, how can we solve the “hard problem” of consciousness? Today’s neuroscientists are trying to provide solutions to this problem, but philosophers have been grappling with it for centuries. Perhaps the two schools of philosophy that have had the most to say on this subject are dualism and materialism.

Substance dualism, however, has since been shaken to its very foundations by questions about what its detractors, such as the philosopher Gilbert Ryle, have called the myth of the “ghost in the machine”. For example, if the human body is a physical machine piloted by a non-physical ghost hiding somewhere inside the human skull, where exactly is that ghost hiding? Is there only one such ghost, or are there many? Who animates the ghost itself, and through what force does this ghost affect the physical world? In an attempt to retain the advantages of having two separate entities while avoiding the pitfalls of substance dualism, philosophers have developed several variants of dualism. These include:

The other major school of philosophy that has something to say about consciousness is materialism. For materialists, the causal relationship between our mental states and our behaviours poses no problem, because both are part of the physical world. A subjective experience such as pain is quite real, but it simply consists of the neuronal states that give rise to it.

Thus, for the proponents of dual-aspect theory, your brain can appear to you as something physical when you regard it from the outside as an object, but as something “mental” when you, as a subject, examine it from the inside (by introspection). Just as physicists can speak of light as both a wave and a particle, we can regard the body and the mind as simply two sides of the same coin. The age-old distinction between mind and body may therefore have been nothing more than an artifact of perception. Neuropsychoanalysis, a movement that tries to combine data from the neurosciences and psychoanalysis to achieve a better understanding of human consciousness, is based on dual-aspect theory.

The second main materialist interpretation of the nature of the mind is psychophysical identity theory. This theory postulates that a person’s conscious states are identical to the physical states of his or her brain. In psychophysical identity theory, unlike in dual-aspect theory, the subjective and objective natures of consciousness cannot be regarded as two different aspects of the same thing, because they are one and the same thing. In other words, mental states can be completely reduced to physical ones, just as water can be reduced to H₂O molecules. The problem, of course, is then to explain how the objective and the subjective, the brain and the mind, can be identical when they seem so different. Two different forms of identity, “type to type” identity and “token to token” identity, have been proposed. They lead to two variants of reductive materialism, one based on identity in the stronger sense and the other on identity in the weaker sense.

Eliminative materialism is even more radical than the two forms of materialism just discussed. Like materialist functionalism, eliminative materialism seeks to circumvent the difficulties inherent in materialism while accepting its basic premise: that matter is the only thing that exists.
Lastly, the mysterian school of philosophers, whose best-known representative is Colin McGinn, are non-materialists who think that the problem of human consciousness simply surpasses human understanding. They refuse to believe that our subjective vision of colours, for example, is simply identical to the activity of a population of neurons in certain areas of the cortex. At the same time, however, these philosophers do not want to return to dualism. They therefore argue that consciousness is a mystery, and that it is a mystery because our concepts of the mental and physical worlds are too crude to address the relationship between the body and the mind in an enlightening way. The situation is somewhat like that of monkeys, who will never perform differential calculus because it would require concepts that are inaccessible to their brains. In fact, every species has limits to its cognitive abilities, and understanding consciousness may simply require concepts that humans cannot access. But the materialists say that the mysterians have given up too fast, and that they base their conclusion on nothing more than their disbelief that the brain’s grey matter can constitute the world in the bright colours that we experience every day. Some materialists believe that one way to make the identity between consciousness and matter less counterintuitive is to apply new concepts from the cognitive neurosciences to our thinking about the phenomenological aspect of consciousness.


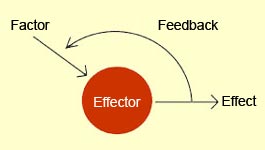

From 1946 to 1953, when the field of psychology was still dominated by behaviourism, the Macy Foundation sponsored a series of conferences in New York City and at Princeton University, in New Jersey. These conferences were attended by specialists from many disciplines, including mathematics, psychology, anthropology, sociology, and neurobiology. Scholars such as Wiener, Shannon, McCulloch, von Foerster, and von Neumann, who regularly attended these conferences, strongly advocated a multidisciplinary approach, which proved highly productive. What are now known as the Macy Conferences gave rise to the cybernetics movement. Cybernetics, now defined as the general science of communication and control in natural and artificial systems, studies how information circulates.

Many biologists, such as Henri Laborit and Henri Atlan, were greatly influenced by concepts of cybernetics. This new science also quickly found applications in computing, then in its infancy, as well as in what would later become known as artificial intelligence (see sidebar). The cyberneticists were also clearly interested in investigating the complex system par excellence: the human mind. And because they rejected all forms of idealism and shared a strong inclination toward materialism, they quite naturally included the study of the brain in their two approaches to complex systems:

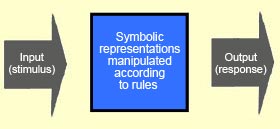

These two approaches tended to complement rather than contradict one another. They gave rise to the two main currents that developed subsequently in the cognitive sciences: cognitivism and connectionism, respectively. The computers developed during World War II, though still very slow, were a great source of inspiration for the cognitivist (also known as the computational) approach. The classic use of the computer as a metaphor for the human mind (a metaphor whose limitations we now know) thus led the cognitivists to believe that the mind translates the components of the external world into internal representations, exactly as a computer does.

This central paradigm of cognitivism dominated the cognitive sciences from the mid-1950s for almost 20 years. As Jerry Fodor, a student of Hilary Putnam, put it, to think is to manipulate symbols, and cognition is nothing more than manipulating symbols the way that computers do. This premise and Fodor’s research inspired the various functionalist approaches, according to which the mind is organized into specialized modules that can be implemented on different physical platforms. This is the famous concept of “multiple realization”. Once mental states had been equated with computer software and the brain with computer hardware, computer simulation and modelling became an ideal means of studying how the human mind operates. This field of inquiry came to be known as “artificial intelligence”, or AI (see sidebar).

The philosopher John Searle distinguished two positions regarding the possibilities of AI. According to the “strong AI” position, all a machine needs in order to be intelligent is the right program. But Searle dealt this position a harsh blow with his Chinese Room argument. According to the “weak AI” position, computers can only simulate the human mind: no matter how much computational power they have, they can never create true intelligence or genuine consciousness. Meteorologists’ computers, though they may be able to simulate the development of hurricanes with great accuracy, are never going to soak us to the skin or flatten our homes.

Cognitivism, inspired by the operation of computers that manipulate symbols without interpreting their meaning, is forced to reduce the brain to a purely syntactic device rather than a semantic one. Epistemologically speaking, this position is vulnerable to attack from many angles. It was in this context that connectionism, the other major current in the cognitive sciences, developed in the 1980s.
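To make the idea of a “purely syntactic device” concrete, here is a minimal sketch in Python. It uses a forward-chaining rule system, chosen only as a convenient illustration of symbol manipulation (it is not a model proposed by Fodor or by anyone else cited here); the symbols, the rules, and the helper names `forward_chain` and `rename` are all hypothetical. The procedure matches symbols by their form alone, so replacing every symbol with a meaningless token yields exactly the same derivation.

```python
from typing import Dict, FrozenSet, Iterable, List, Set, Tuple

# A rule pairs a set of premise symbols with one conclusion symbol (hypothetical format).
Rule = Tuple[FrozenSet[str], str]


def forward_chain(facts: Iterable[str], rules: List[Rule]) -> Set[str]:
    """Apply the rules until no new symbol can be derived.

    Matching is by string identity only: the procedure never consults
    what any symbol is supposed to mean.
    """
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


def rename(mapping: Dict[str, str], facts: Iterable[str],
           rules: List[Rule]) -> Tuple[Set[str], List[Rule]]:
    """Replace every symbol with an arbitrary token, keeping the structure intact."""
    new_facts = {mapping[f] for f in facts}
    new_rules = [(frozenset(mapping[p] for p in prem), mapping[c]) for prem, c in rules]
    return new_facts, new_rules


rules = [
    (frozenset({"RAIN"}), "WET_GROUND"),
    (frozenset({"WET_GROUND", "FREEZING"}), "ICE"),
]
facts = {"RAIN", "FREEZING"}
meaningful = forward_chain(facts, rules)

# Swap every symbol for a meaningless token and run the very same procedure.
mapping = {"RAIN": "X1", "WET_GROUND": "X2", "FREEZING": "X3", "ICE": "X4"}
nonsense = forward_chain(*rename(mapping, facts, rules))

print(sorted(meaningful))  # ['FREEZING', 'ICE', 'RAIN', 'WET_GROUND']
print(sorted(nonsense))    # ['X1', 'X2', 'X3', 'X4']
```

Because nothing in `forward_chain` depends on what RAIN or ICE refer to, the system's behaviour is fixed entirely by syntax; whatever meaning the symbols have is supplied by us, the observers.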

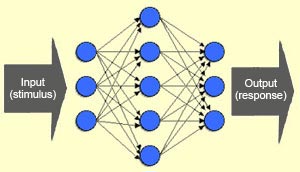

Thus connectionism is a “bottom-up” approach. It is associated with philosophers such as Daniel Dennett and Douglas Hofstadter. In connectionism, mental representations are not discussed in terms of symbols, but are instead analyzed in terms of links among numerous distributed, co-operative, self-organizing agents. Marvin Minsky, who inspired this approach, thus regards the cognitive system as a society of micro-agents that are capable of solving problems locally. Connectionists therefore believe that the analysis must penetrate beneath symbolic operations, down to the “sub-symbolic” level. In contrast to the computing analogy used in cognitivism, connectionism does not depend on complex algorithms executed sequentially, or on a control centre that processes all the information, because the networks of neurons in the brain are considered quite capable of doing without them. What is special about the brain’s neural networks, however, besides their distributed mode of operation, is that the strength of the connections among their neurons is altered by experience. Connectionism was thus inspired directly by Hebb’s rule, which states that when two neurons tend to be activated simultaneously, the connection between them is strengthened; when they do not, the connection becomes weaker. The connectivity of a system thus becomes inseparable from the history of its transformations. And cognition becomes the emergence of global states from the application of simple rules (such as Hebb’s rule) to a network of elements that are just as simple, but very numerous and highly interconnected. The big difference from cognitivism is that connectionism regards networks of neurons as being not programmed, but trained (see box below), and regards a mental representation as a correspondence between an emergent global state and properties of the external world.
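The version of Hebb’s rule described above can be sketched in a few lines of Python. This is a hedged illustration, not a standard textbook formulation: the update (strengthen on co-activation, weaken when only one of the two neurons is active, leave unchanged when both are silent), the learning rate, the starting weight, and the activity record are all assumptions chosen for clarity. It shows how the final weights come to reflect the history of activity rather than an explicit program.

```python
from typing import Iterable, Tuple


def hebbian_update(w: float, pre: int, post: int, rate: float = 0.1) -> float:
    """One step of a simplified Hebbian rule (an illustrative assumption).

    pre, post: 1 if the neuron was active on this step, 0 otherwise.
    Co-activation strengthens the connection; activity in only one of the
    two neurons weakens it; joint silence leaves it unchanged. The weight
    is kept non-negative.
    """
    if pre and post:
        return w + rate
    if pre != post:
        return max(0.0, w - rate)
    return w


def train(history: Iterable[Tuple[int, int, int]], w0: float = 0.5,
          rate: float = 0.1) -> Tuple[float, float]:
    """Return the final weights from inputs A and B onto one output neuron.

    Each history entry is (A active, B active, output active).
    """
    w_a = w_b = w0
    for a, b, out in history:
        w_a = hebbian_update(w_a, a, out, rate)
        w_b = hebbian_update(w_b, b, out, rate)
    return w_a, w_b


# Invented activity record: A always fires together with the output,
# while B fires just as often but without a consistent relation to it.
history = [(1, 0, 1), (1, 1, 1), (0, 1, 0), (1, 0, 1), (0, 1, 0), (1, 1, 1)]
w_a, w_b = train(history)
print(round(w_a, 2), round(w_b, 2))  # 0.9 0.3
```

No line of this code states that A matters more than B; the difference between the two weights emerges from the training history alone, which is the sense in which a connectionist network is trained rather than programmed.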

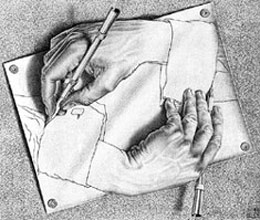

In other words, with the brain, the results of processes become the processes themselves. Our cognitive processes are regarded not as representing a world that exists independently, but as causing the emergence of a world as something inseparable from the structures that embody the cognitive system. This perspective has led some researchers to seriously question whether there even is a pre-existing world from which the cognitive system extracts information. For example, in response to this tenacious metaphor of a cognitive agent that could not survive without a map of an external world, Francisco Varela developed his theory of enaction.


The development of the neurosciences as a major discipline within the cognitive sciences has gradually revealed the flaws in the classical model of consciousness. Data from brain-imaging studies and many other kinds of experiments are far from consistent with the idea that all of our mental processes are consciously accessible to us, or that our consciousness springs from a single point in our brain as the result of our transparent perception of the world, or that the intentions we can access consciously are sufficient causes for our behaviours. For example, philosopher Daniel Dennett writes: “The idea of a special center in the brain is the most tenacious bad idea bedeviling our attempts to think about consciousness.” Dennett’s “Multiple Drafts” model of consciousness shattered the illusion of what he calls the Cartesian theatre.

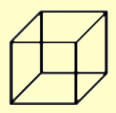

The first phenomenon that we will examine involves situations where the conscious perception changes while the stimulus presented does not. As we shall see, such cases of rival perceptions pose problems for the classical model of consciousness. Binocular rivalry is one example. In the experimental protocol used to study it, the subject looks into a special pair of binoculars in which each eyepiece presents a different image. This is a very artificial situation, because it not only separates the fields of vision of the two eyes but also presents them with conflicting information. Under these conditions, the subject’s perception alternates between two states: sometimes the subject sees the image presented to the left eye, and at other times the image presented to the right. This would be a strange result indeed if the classical model were correct in describing conscious perception as a “transparent window on the world”. Which world would be the real one here: the one seen by the left eye, or the one seen by the right?

Scientists have also discovered situations where the reverse occurs: the conscious perception does not change, even though the stimulus does. This phenomenon, known as change blindness, casts further doubt on the classical model’s unitary, detailed view of our consciousness of the world. It is true that when you look at a landscape, you have the impression of being aware of the entire scene in all its rich detail. And it is also true that if something appears in or disappears from the scene, you notice it immediately. The human visual system is indeed very sensitive to anything that creates an impression of movement in the scene, such as the appearance or disappearance of an object as in the animation below. When such an event occurs, you immediately turn your gaze toward the change, to try to identify it.

The fact that the mask makes it so much harder to identify what is changing in the scene suggests that, contrary to what the classical model of consciousness would have us believe, at any given moment we are consciously processing only a small proportion of a visual scene. We thus never actually form a detailed visual representation of the entire scene. Some neurobiologists believe that we have the illusion of being fully conscious of the entire scene because we know that at any time, we can shift our attention from one point in the scene to another to check the details. According to these scientists, we are in a sense using the world itself as a form of external memory. In their view, we are also processing the entire scene at all times, but only at a preconscious level that would let us identify certain details in it consciously if we wanted to. Lastly, the sidebar on the research done by Daniel Simons shows that change blindness can also be observed outside the laboratory, in certain interpersonal situations.

Optical illusions are another common phenomenon that does not fit at all with the idea of consciousness as a faithful reflection of the reality that surrounds us. The very essence of an optical illusion is to give us a conscious perception that is incorrect, and hence different from reality. One can readily see the problem that this raises for the classical model of consciousness.

But curiously, though our conscious perception of the size of the two chips may be influenced by this optical illusion, that is not the case for the actions that we direct toward them when they are presented as 3D objects through the effect of perspective, as in the image below.

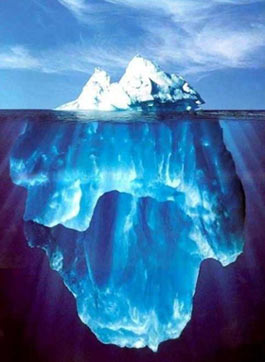

This result indicates that “visual perception” and “visually guided action” can be dissociated from each other. In other words, behaviours such as picking up an object are not misled by the erroneous conscious perception. It follows that these behaviours must be controlled by processes that escape consciousness. Another example of this phenomenon is the old joke that if you want to ruin your opponent’s concentration in a tennis match, all you have to do is compliment him on the accuracy of his serve, the smoothness of his return, and so on. Usually, that will make him self-conscious. He will start trying to control consciously the movements that years of constant practice have taught him to make unconsciously with perfect accuracy, and he’ll end up sending the ball into the net!

The existence of an unconscious aspect of vision is also revealed in spectacular fashion by the phenomenon of blindsight. People with blindsight have suffered damage to either the left or the right primary visual cortex and have consequently lost their sight in the opposite visual hemifield. But in experiments where a light stimulus is sometimes presented in their blind hemifield and sometimes not, and they are asked to “take a chance” each time and say whether such a stimulus was present, they respond correctly far more often than they would by guessing at random! And when they are told how accurate their answers have been, they remain incredulous, convinced that they must have made lucky guesses, because they say they saw nothing at all in that part of their visual field. People with blindsight thus have some surprising residual visual capabilities. These capabilities appear to be made possible by subcortical visual structures and by some neural pathways that lead directly from the lateral geniculate nucleus to visual areas V4 and V5, without passing first through primary visual area V1.
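What “far more often than they would by guessing at random” means can be made precise with a binomial calculation. If stimulus-present and stimulus-absent trials are equally frequent (an assumption made here), any guessing strategy is right half the time, and the probability of reaching a given score by luck alone is a binomial tail. The Python sketch below computes it; the trial counts are hypothetical numbers chosen for illustration, not results from any blindsight study, and `p_at_least` is a helper name introduced here.

```python
from math import comb


def p_at_least(k: int, n: int, p: float = 0.5) -> float:
    """Probability of k or more correct answers in n trials by pure guessing.

    Exact binomial tail: sum over i >= k of C(n, i) * p**i * (1 - p)**(n - i).
    """
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


# Hypothetical scores out of 100 present/absent trials (illustration only).
print(p_at_least(50, 100))  # about 0.54: 50 correct is fully consistent with guessing
print(p_at_least(70, 100))  # about 4e-05: 70 correct is very unlikely to be luck
```

A patient who insists on having seen nothing yet scores well above 50% is therefore very unlikely to be guessing, which is why such results are taken as evidence of residual, unconscious visual processing.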
Thus, although the primary visual areas seem to be essential for conscious vision, a number of vision-guided behaviours do not seem to require any conscious control. But how is this possible? Isn’t consciousness supposed to arise first, and action to flow from it afterward? Yet another chink in the armour of the classical model of consciousness! And there are more.

Just as with perception, entire areas of learning and memory operate outside the realm of consciousness. First of all, at any given time, most of our memories are unconscious. We can recall them consciously, but they spend most of their time as unconscious traces in our nervous systems. Second, there are the numerous “implicit” forms of memory. Simply acquiring a particular skill, such as bicycle riding or touch typing, involves procedural memory that we cannot access consciously. The same is true for the priming effect, in which past exposure to a relevant piece of information influences our cognitive processes without our even realizing it (see sidebar). For example, if you are given a long list of words to memorize, and one of these words recurs several times in the list, you will find it easier to recall this word, even if you didn’t consciously notice that it occurred more often than the others. (A good portion of advertising is based on this principle of unconscious preferential recognition.) Studies of people with amnesia have also shown the great autonomy of this implicit memory system, which is often preserved despite a loss of explicit memory. The subjects in these studies, such as the famous patient H.M., would be presented every day with a problem such as the Tower of Hanoi puzzle. Every day they would say that this was the first time they had ever tried to solve it, and yet they would find the solution a bit more quickly every day.
It thus seems clear that we accomplish a multitude of tasks unconsciously, and that these unconscious processes are far more numerous than our conscious ones. Language might be cited as a final example, one which also shows that the two kinds of processes, conscious and unconscious, can be at work simultaneously. When you are having a conversation, you are forming conscious thoughts at the same time as you are using the syntax and vocabulary of your mother tongue completely automatically. Given these many manifestations of unconscious processes, we can, as a first approximation, distinguish not one but two sub-systems. The first is conscious, often verbal or visual, and operates serially (“You can’t think of more than one thing at a time.”). The second is largely unconscious, often affective, and responds to stimuli automatically. It is composed of numerous units operating as massively parallel processors, so it has a much greater processing capacity. The demonstration that the majority of our cognitive processes are in fact unconscious is regarded as a veritable revolution, one that has ended the reign of the classical model of consciousness. This unconscious part of our minds, which is also far more “intelligent” than had previously been believed (see sidebar on difficult choices), continues to amaze scientists with the diversity of its processes: mental and sensorimotor automatisms, implicit knowledge and even implicit reasoning, semantic processing, and so on. But these two sub-systems, the conscious and the unconscious, do not suffice on their own to manage the complexity of the real world, which the classical model of consciousness so vastly underestimates. They are therefore supported by another system, composed of what are called our attentional processes.


To develop neurobiological models of consciousness, scientists start by looking for what are called neural correlates of consciousness. This involves identifying variations that consistently occur in the activity of specific groups of neurons whenever a particular piece of conscious content appears. For example, some experimental protocols commonly used to identify neural correlates of conscious visual perception are binocular rivalry, change blindness, and bistable images (images that can be interpreted in two different ways). True neurobiological models of consciousness must be distinguished from such simple neural correlates. Identifying correlations between the activity of certain groups of neurons and certain subjective or phenomenological properties of consciousness can certainly help to define what is plausible when one is developing a model. But identifying such correlations does not automatically produce a comprehensive explanation that relates these neuronal activities to the phenomenon of consciousness. Producing such explanations requires more general models that try to explain the many facets of consciousness by combining data from all branches of the contemporary cognitive neurosciences. Most of these models began to be developed in the early 1990s, in the wake of the first international conferences devoted essentially to the study of consciousness, like the one announced in the poster below.

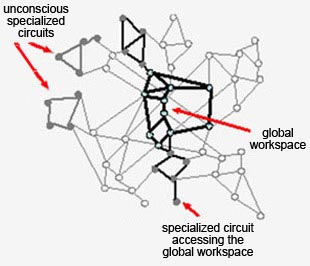

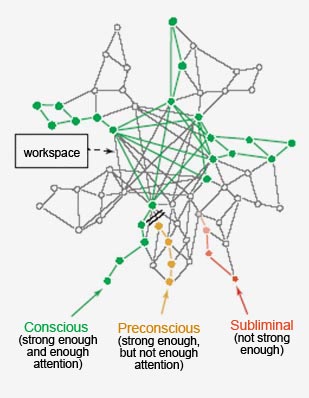

Some of these models of how consciousness operates take its psychological properties as their starting point. Others were developed on the basis of the brain structures that seem to play an important role in consciousness. Still other models are centred on the activity of the neurons themselves, in particular the timing of the discharge of nerve impulses. These models also develop some concepts that are specific to their level of analysis. But because all of these models are intended to be firmly anchored in the neural substrate of consciousness, it is not surprising to see the same concepts recurring in many of them. Of course, individual models may use these concepts in subtly different ways or partially redefine them, but the explanatory power of several of these concepts is increasingly being confirmed. So before we give a brief overview of some of the major neurobiological models of consciousness, here is an equally brief presentation of their main concepts.

For Daniel Dennett, consciousness is about “fame in the brain”. At any moment, thousands of mental objects are forming and dissolving everywhere in the brain as they engage in a Darwinian competition with one another. The “self” might be regarded as what emerges from this competition. Thus, at any given time, there are many possible conscious states, but only one of these multiple versions will get its moment of glory and become “famous”, i.e., conscious, for the space of a second. According to this model, consciousness cannot be located precisely in time or in any particular part of the brain, which totally excludes classical, “Cartesian theatre” models of consciousness.

Another recurring concept in neurobiological models of consciousness, and one with many variants, is the “global workspace”.
Originally developed by psychologist Bernard Baars, this concept is based on the observation that the human brain comprises several specialized systems (for perception, attention, language, etc.), each of which carries out its task at a level that does not reach the threshold of consciousness.
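In Baars’s proposal, the content that gains access to the global workspace becomes available to all of the specialized systems at once, while everything else stays local. The toy Python sketch below illustrates that architecture under heavy simplifying assumptions: the module names, the numeric salience values, the fixed threshold, the winner-take-all selection, and the names `Processor` and `workspace_cycle` are all hypothetical choices made for illustration, not parameters of Baars’s model or of Changeux and Dehaene’s.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Processor:
    """A specialized module that does its own work locally (names are hypothetical)."""
    name: str
    received: List[str] = field(default_factory=list)  # broadcasts seen so far

    def propose(self, stimulus: Dict[str, float]) -> float:
        # Salience of this module's local result for the current input.
        return stimulus.get(self.name, 0.0)


def workspace_cycle(processors: List[Processor], stimulus: Dict[str, float],
                    threshold: float = 0.6) -> Optional[str]:
    """Run one cycle of a toy global workspace.

    Every module computes a salience locally. Only the single most salient
    result, and only if it reaches the threshold, is broadcast to every
    module; all other results stay local (the analogue of unconscious
    processing). Returns the winning module's name, or None.
    """
    saliences = {p.name: p.propose(stimulus) for p in processors}
    winner = max(saliences, key=saliences.get)
    if saliences[winner] < threshold:
        return None  # nothing becomes globally available on this cycle
    message = f"{winner}-content"
    for p in processors:
        p.received.append(message)  # global availability = broadcast to all
    return winner


modules = [Processor("vision"), Processor("audition"), Processor("language")]
print(workspace_cycle(modules, {"vision": 0.9, "audition": 0.4}))  # vision
print(workspace_cycle(modules, {"vision": 0.3, "audition": 0.5}))  # None
print([m.received for m in modules])
# [['vision-content'], ['vision-content'], ['vision-content']]
```

In the second cycle the auditory result is computed but never shared with the other modules: in this toy setting, that is the counterpart of processing that stays below the threshold of consciousness.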

This neuronal workspace, according to Baars, thus serves as a site for information exchange. Other subsystems can then take advantage of this available information too, and it is this availability that constitutes consciousness, while the information processed by the subsystems in isolation remains unconscious. This conception of consciousness as something akin to a form of momentary working memory also provides an account of the interaction between conscious and unconscious processes that is observed in various phenomena. Jean-Pierre Changeux and Stanislas Dehaene took the concept of the global workspace one step further by defining a neuroanatomical basis for it—a sort of “neural circuit” for the conscious workspace. Their model was based on the pyramidal neurons of the cerebral cortex, with their long axons that can connect areas of the cortex that are distant from one another. Changeux and Dehaene attempted to describe the various states that can be observed in this connectionist model of consciousness and then tried to identify the mechanisms that let the mind pass from one of these states to another. In contrast to Baars’s model and to several other brain-imaging studies that simply distinguished one conscious state from multiple unconscious ones, Changeux and Dehaene’s model distinguishes three different possible states of activation:

Francis Crick and Christof Koch also examine the neural correlates of consciousness, but their emphasis is on the circuits of the visual system. For Crick and Koch, the key to conscious processes lies in the synchronized neuronal oscillations that occur in the cortex at frequencies around 40 hertz (35 to 75 Hz). Various visual areas of the brain that respond to different visual characteristics of the same object (form, colour, movement, etc.) do in fact fire synchronously at a particular frequency. And if another object is located just beside the first one in the person’s field of vision, then other neurons in that person’s visual areas also fire synchronously, but not at the same moments as the neurons associated with the first object. According to Crick and Koch, conscious perceptual units arise from this temporal synchronization of the oscillations of neuronal activity. This possible answer to the famous binding problem, that is, how the brain combines the outputs of the various sensory modules that operate in parallel, is now widely accepted as a working hypothesis.

Rodolfo Llinás focuses on a global form of neuronal synchronization that might prove essential for determining which particular perception becomes conscious. According to Llinás, the thalamus triggers cortical oscillations that sweep the brain from front to back, each sweep taking 25 milliseconds, so that they recur 40 times per second: the same 40-Hz frequency that has often been associated with the conscious perceptual unit. Thus, in addition to the cortical oscillations that bind the various aspects of a perceived object together, there would be a second type of synchrony, between a given neuronal assembly and these non-specific thalamic oscillations. The assembly that is in phase with these non-specific oscillations would then be the one that becomes conscious.

Gerald Edelman accords less importance to the specific activity of certain neurons than to the general organization of the brain’s circuits.
He starts from the premise that consciousness has not always existed: it appeared at some point in the evolution of species, just as it appears at a given time in the development of each human being. Edelman then attempts to identify the new brain architectures that led to the emergence of consciousness. According to Edelman, a selective mechanism that he calls “neural Darwinism” creates a system of neural maps composed of neuronal assemblies that are responsible for our various perceptual abilities. When the brain receives a new stimulus, several of these maps are activated and send signals to one another. Edelman uses the term “re-entrant loops” to designate this pattern of interconnections among the various neural maps. The reciprocal connections between the thalamus and the cortex, also known as thalamocortical loops, are central to this model of “re-entrant maps”, whose looping operation constitutes the starting point for consciousness, according to Edelman. Thus, in Edelman’s view, consciousness is associated not with a permanent anatomical structure, but rather with an ephemeral activity pattern that arises at various locations in the cortex, wherever these re-entrant loops allow it to form. That is why Edelman and his colleague Giulio Tononi instead describe these conscious processes in terms of a “dynamic core”.

A dynamic view of consciousness is also taken by Walter J. Freeman, who uses the mathematics of non-linear dynamics to interpret the neuronal oscillations associated with conscious phenomena. According to Freeman, the brain responds to changes in the world by destabilizing its primary sensory cortices. The resulting new, chaotic oscillation patterns look like noise but actually conceal an underlying order from which new meanings are continuously constructed. Consciousness thus plays the role of an operator that modulates these cerebral dynamics.
Residing both nowhere and everywhere, this operator continuously re-forms conscious contents that are supplied by the various parts of the brain and that undergo the rapid, extensive changes that we associate with human thought.

But conscious thought and the decisions that arise from it do not involve abstract reasoning alone. For Antonio Damasio, one cannot speak of consciousness without including the constant monitoring of an affective loop in which the brain and the body engage in a continuous dialogue (via the autonomic nervous system, the endocrine system, etc.). Damasio champions the idea that our conscious thoughts depend substantially on our visceral perceptions. For him, consciousness develops through the brain’s monitoring of internal somatic states (notably via the insula), and this monitoring has evolved because it lets us use these somatic states to mark, or evaluate, our external perceptions. Damasio thus uses the concept of somatic markers to describe how the emotions of our inner world interact with our perceptions of the outside world.

One last concept, which gives the body and the environment an even more extensive role in the genesis of conscious processes, is called enaction. It was developed by Francisco Varela and is part of the intellectual current known as embodied cognition. The central idea of enaction is that the cognitive faculties develop through the body’s real-time interaction with a given environment. From the enactive perspective, perception is not passive reception; it is inextricably linked with the way that the body/brain system guides its own actions in the local situation of the moment. In the terminology of enaction, our senses enable us to “enact” meanings, that is, to modify our environment while also being constantly shaped by it.
The essence of cognition and consciousness therefore cannot be found in representations of a world completely external to ourselves, nor solely in a particular neuronal organization; instead, it depends on all of the organism’s sensorimotor structures and its capabilities for bodily action, coupled with a particular environment.

These numerous concepts derived from neurobiological models of consciousness let us take the findings of the neurosciences into account in developing our understanding of consciousness. As for explanations of why consciousness exists, they are at least as numerous.
